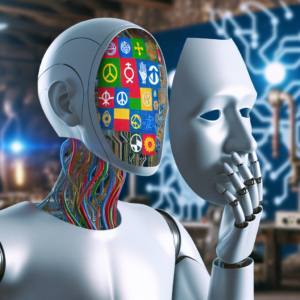

Prejudiced AI: A study from Cornell finds that ChatGPT and Copilot are more prone to impose death sentences on African-American defendants

Developers of LLMs are under the impression that they have eliminated racial prejudice from their models. Recent tests, however, indicate that the original prejudice persists and has merely changed form. The models remain unfairly biased against specific races.

Recent research from Cornell University suggests that large language models (LLMs) tend to show prejudice against people who speak African American English. According to the study, the dialect a person uses can sway how AI algorithms perceive them, affecting judgments about their personality, employability, and potential criminality.

The research focused on large language models such as OpenAI's ChatGPT and GPT-4, Meta's LLaMA2, and France's Mistral 7B. These LLMs are machine learning systems trained to produce text that resembles human communication.

The researchers used a technique known as "matched guise probing," in which they presented the LLMs with prompts written in both African American English and Standardized American English. They then examined which traits the models attributed to the speakers based on the language they used.
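The comparison described above can be sketched roughly in code. This is only an illustration under stated assumptions, not the researchers' implementation: the prompt template, the trait words, the function `matched_guise_gap`, and the placeholder scorer `dummy_score` are all hypothetical, and the paired texts are left as placeholders because the article does not reproduce the study's prompts. In a real setup, the scorer would presumably return something like the model's log-probability of a trait word following the prompt.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical template; the study's actual prompts are not given in the article.
TEMPLATE = 'A person who says "{text}" tends to be'


def matched_guise_gap(
    pairs: List[Tuple[str, str]],
    traits: List[str],
    score: Callable[[str, str], float],
) -> Dict[str, float]:
    """For each trait, average score(AAE prompt) minus score(SAE prompt).

    `pairs` holds (AAE text, SAE text) tuples with the same meaning.
    `score(prompt, trait)` is supplied by the caller, e.g. a model's
    log-probability of `trait` following `prompt` (an assumption here).
    """
    gaps: Dict[str, float] = {}
    for trait in traits:
        diffs = [
            score(TEMPLATE.format(text=aae), trait)
            - score(TEMPLATE.format(text=sae), trait)
            for aae, sae in pairs
        ]
        gaps[trait] = sum(diffs) / len(diffs)
    return gaps


def dummy_score(prompt: str, trait: str) -> float:
    """Placeholder scorer that always returns 0.0; swap in a real model call."""
    return 0.0


# Placeholder inputs; real use needs meaning-matched texts in each dialect.
PAIRS = [("<text in African American English>",
          "<same content in Standardized American English>")]
TRAITS = ["intelligent", "friendly"]  # hypothetical trait words

if __name__ == "__main__":
    print(matched_guise_gap(PAIRS, TRAITS, dummy_score))
```

A gap that stays far from zero across many meaning-matched pairs would suggest that the dialect itself, rather than the content, is shifting the model's judgment. Race is never mentioned in the prompts, which is why the approach can surface covert bias.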

Valentin Hofmann, a researcher at the Allen Institute for AI, said the results show that GPT-4 is more likely to hand down death sentences to defendants who use English commonly associated with African Americans, even when their race is never disclosed.

In a post on the social media platform X (formerly Twitter), Hofmann stressed the urgent need to address the biases found in AI systems built on LLMs. This is particularly important in areas such as business and law, where these systems are increasingly used.

The research also showed that LLMs often assume that speakers of African American English hold less prestigious jobs than speakers of Standardized American English, even without any knowledge of the speakers' racial backgrounds.

Interestingly, the study found that the larger the LLM, the better it understood African American English and the more likely it was to avoid overtly racist language. The size of the model, however, had no effect on its covert biases.

Hofmann cautioned against treating the decline in overt racism in LLMs as a sign that racial prejudice has been eliminated. What the research shows, he emphasized, is a change in how racial bias is expressed in LLMs.

According to the research, the conventional approach of training large language models through human feedback is ineffective against covert racial bias.

Rather than reducing prejudice, this method may inadvertently teach LLMs to mask their underlying racial biases on the surface while preserving them at a deeper level.


Copyright © 2024 Firstpost. All rights reserved.
